Welcome to issue 19 of the digest. To not bury the lede, here's the registration for the AI×UX NYC meetup on May 17.

I'm getting bored of chat interface demos.

Don't get me wrong, the tech is impressive and all that. It’s just a low-bandwidth way of interacting with computers for many tasks.

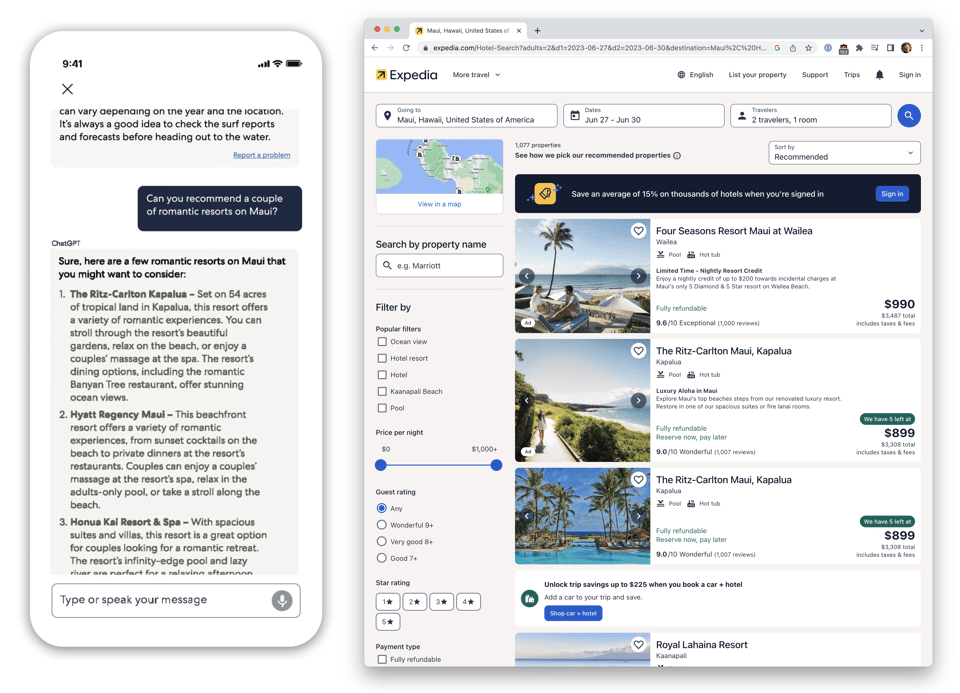

Compare the ChatGPT interface that Expedia recently launched to a search on Expedia proper:

Ultimately, booking a vacation is still a human-in-the-loop task: I want to have final say before a bot books a romantic vacation for me.

Chat inherently frames the interaction around the medium that the AI model understands best, not the interface that is most usable for humans.

What’s the point of all this AI if I have to bend to its needs, and not the other way around?

Aside: UI bandwidth

When talking about computer-to-computer communication, we often measure bandwidth: the rate of information transmission. I find it useful to measure user interfaces in a similar vein: a high-bandwidth user interface is one that saturates the human’s ability to consume information from it.

One idea I've been playing with is using AI to subtly enrich a traditional UI.

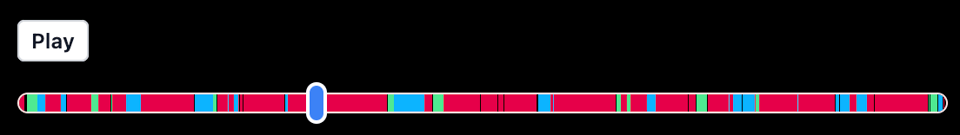

Compare the slider in a typical podcast player with this one. I made it by running a neural speaker diarization model over the audio, and then using different colors to indicate who is speaking at a given time:

It’s a subtle change that can be incorporated into an existing playback interface, but it ends up being a very useful way to jump around a podcast.

It uses the same number of pixels as a regular slider widget, but conveys additional information; it has a higher bandwidth.
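For the curious, here is a rough sketch of how the coloring step could work. It is not the code behind the slider above. It assumes the diarization model has already produced a list of speaker turns with start and end times in seconds, and it turns them into a CSS `linear-gradient` string that could be used as the slider track's background. The names `Segment`, `PALETTE`, `SILENCE`, and `slider_gradient` are made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    speaker: str  # label assigned by the diarization model, e.g. "A"
    start: float  # seconds from the start of the audio
    end: float    # seconds from the start of the audio


# Hypothetical palette; any set of distinguishable colors would do.
PALETTE = ["#4e79a7", "#f28e2b", "#59a14f", "#e15759"]
SILENCE = "#dddddd"  # color for stretches where nobody is speaking


def _stop(color: str, start: float, end: float, duration: float) -> str:
    """Format one hard-edged color stop as percentages of the track."""
    return f"{color} {start / duration * 100:.2f}% {end / duration * 100:.2f}%"


def slider_gradient(segments: list[Segment], duration: float) -> str:
    """Build a CSS linear-gradient with one colored band per speaker turn."""
    if duration <= 0:
        raise ValueError("duration must be positive")

    colors: dict[str, str] = {}  # speaker label -> assigned color
    stops: list[str] = []
    cursor = 0.0  # how far along the track has been painted so far

    for seg in sorted(segments, key=lambda s: s.start):
        # Clip to the track, and let an earlier turn win where turns overlap.
        start = max(seg.start, cursor)
        end = min(seg.end, duration)
        if end <= start:
            continue
        if start > cursor:
            stops.append(_stop(SILENCE, cursor, start, duration))
        if seg.speaker not in colors:
            colors[seg.speaker] = PALETTE[len(colors) % len(PALETTE)]
        stops.append(_stop(colors[seg.speaker], start, end, duration))
        cursor = end

    if cursor < duration:
        stops.append(_stop(SILENCE, cursor, duration, duration))

    return "linear-gradient(to right, " + ", ".join(stops) + ")"


turns = [Segment("A", 0, 30), Segment("B", 30, 60)]
print(slider_gradient(turns, duration=120))
```

Because every band has an explicit start and end position, the gradient renders as solid blocks rather than blended colors, and it occupies exactly the same pixels as the plain track it replaces.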

AI×UX NYC

When I saw that our friends at latent.space (go subscribe!) were hosting an event on the topic of AI and UX in San Francisco, I felt the FOMO from not being there.

Finally, an AI event that wasn’t just chat demos!

They graciously gave us their blessing to host an NYC version of the event. It will happen on May 17th at Yext’s NYC office in Chelsea.

Registration is now open. If you're in NYC, I hope to see you there!

We’re also accepting pitches if you have a cool demo to show.

Until next time,

Paul

You just read issue #20 of Browsertech Digest. You can also browse the full archives of this newsletter.